Think first, Talk later!

Reasoning traces help make language models more capable, trustworthy, transparent, and valuable. But building models that reason well requires more than just good data. It takes open tools, strong foundations, and training approaches that the open-source world doesn’t yet have in one place.

As part of Google's Tunix Hackathon, we started with Google’s open-weight Gemma model (Gemma2 2B), fine-tuned it with Tunix on TPU, and taught it how to reason through complex questions. We created a model that not only gets the right answer, but also explains how it got there.

This contribution may help make step-by-step reasoning more accessible for the entire open-source community and lower the barrier to building transparent, capable, and explainable AI. We explicitly focused on including approaches that focus on non-verifiable domains like creative writing.

Like our parents always said: think first, talk later. Let’s teach our models to do the same.

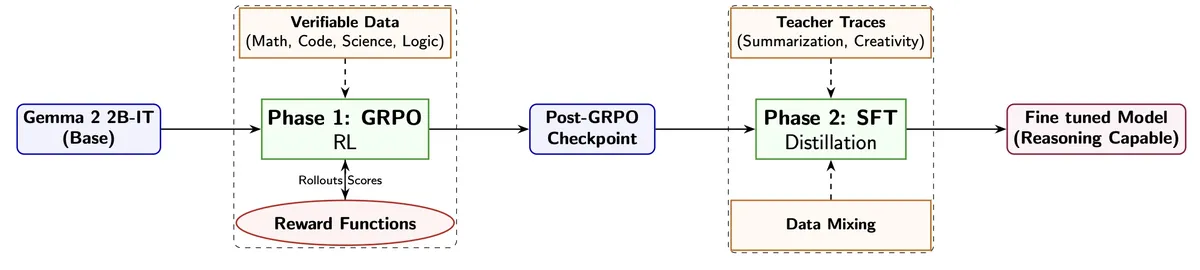

Training strategy

GRPO

We start fine-tuning the Gemma 2 2B-IT model by using Group Relative Policy Optimization (GRPO).

GRPO is an RL algorithm designed to enhance the reasoning abilities of LLMs. It is a variant of Proximal Policy Optimization (PPO) that reduces memory usage by eliminating the need for a separate value function model. GRPO works by generating multiple responses for a given prompt, evaluating these responses using a reward model, and then calculating a relative advantage based on the group's performance to update the policy.

In this phase we are only using datasets where answers are verifiriable, including other domains but always having a single choice answer. We are focusing on Math, Coding, Science, General Knowledge and logical reasoning.

Specifically we are defining reward functions dependent on the specific domain. We are mixing the classical RLVR approach having a verification func and RaR defined specifically dependent on the domain.

We also defined a structure for the model's response:

We instruct the model to first reason

between the <reasoning> and </reasoning> tags and inside follow the four steps below. After

reasoning, we expect it to provide the answer between the <answer> and

</answer> tags.

<reasoning>

Analysis & Strategy:

...

Step-by-Step Execution:

...

Verification & Self-Correction:

...

Final Synthesis:

...

</reasoning>

<answer>

...

</answer>

For the reward functions we included structural and ground-truth alignment or dependent on the domain approximation rewards. You can find more precise descriptions in the corresponding notebook section.

We deliberately skipped the SFT phase upfront, used for DeepSeek-R1, since we utilize the Gemma 2 2B-IT instruct model. We leverage In-Context Reinforcement Learning - by defining the reasoning structure in the system prompt and enforcing it via rewards.

Training is performed using LoRA adapters (Hu et al., 2022) with a rank of 32 (α = 64). We use a batch size of 1 - to not overload, a learning rate of 3e-6 and set the Number of generations - group to 3.

We also limit the amount of examples and precisely determine how many examples to include from which dataset. You can adapt that based on your needs and available compute.

Distillation

Afterwards we continue fine-tuning using Supervised Fine-Tuning, specifically PEFT with LORA.

This stage focuses on improving the model’s reasoning capabilities in writing, creativity, summarization and other general-purpose tasks and thereby enabling domain transfer. a Since generating synthetic reasoning data from our own model is computationally too expensive for the 9-hour window, we adopt an Offline Distillation strategy.

Execution: We perform a Supervised Fine-Tuning (SFT) run on the Post-GRPO checkpoint from Phase 1 using this data. This rapidly "teaches" the model to apply the structured thinking patterns (learned in Phase 1) to open-ended and creative queries without the runtime cost of generating new rollouts.

This last finetuning step is again performed using LoRA adapters of rank 32 (α = 64), with a batch size of 8 and a learning rate of 5e-6 to prevent the forgetting of GRPO logic.

There are other reasonable approaches such as On-Policy Distillation, direct preference optimization (DPO), LLM-as-a-judge, or creating our data via Rejection Sampling based on the Post-GRPO checkpoint. Considering the 9-hour limitation the chosen strategy seems to most suitable.

Our distillation strategy aligns with the DeepSeek-R1 paper approach.

— DeepSeek-R1 Paper (2025)

They also noted that:

We follow this recommendation by prefacing the SFT distillation phase with a targeted GRPO reinforcement learning stage.

Sequential Merging

We merge the GRPO adapter into the base model to serve as the initialization for Phase 2. The final SFT checkpoint adapter is then merged for the final model.

We train Gemma2-2B-IT on a v5e-8 TPU within a single 9-hour Kaggle TPU session.

Dataset creation

We created two datasets, specifically adapted to the two phases.

GRPO:

As described above we focus on verifiriable data, combining math, coding, science and logic - with a focus on logic and science since the 2B models isn't very good with math in general, especially without tools; and the model is not particularly well trained with code.

We specifically adapted each dataset to follow a standardized structure, which can be used for the reward functions afterwards.

question, answer, domain, choices

domain to enable dynamic switching inside the reward funcs.

choices to check for the specific value for single choice questions - and allow that to be valid as well.

• Math: openai/gsm8k

• Coding: google-research-datasets/mbpp

• Science: cais/mmlu, allenai/ai2_arc

• Logic: ReClor, hotpot_qa, logiqa

SFT:

We created a synthetic dataset combining:

• Creativity: using ~500 basic prompts that force creativity and generated high quality answers that also match our defined structural reasoning format using gemini-2.5-pro.

• Summarization: A mix of the EdinburghNLP/xsum - highly concise, one sentence summaries, knkarthick/samsum

• Extracting logic and outcomes from text messages - longer summaries. For each of the datasets we took 400 examples and generated reasonable reasoning traces using gemini-2.5-pro matching our defined structure from above.

• Data Mixing: Some Logic, Science, Math ~200 examples, where we again created reasoning traces using gemini-2.5-pro to match our structure defined above.

Although the dataset is quite small ~1500, we wanted to test the influence of that phase postponed after grpo and check whether especially the non-verifiriable domains, such as writing and creativity can be improved while mantaining the models performance regarding the verifiriable domains learned before.

We saw that the models overall capabilities decreased for this specific setting and dataset, this is why we are using the grpo checkpoint in the end. You can find more about that in the conclusion section.

Generally there are great datasets you could use to do SFT on smaller models - like this one: open-thoughts/OpenThoughts-114k, which is inspired by the deepseek-R1 dataset creation approach described in the paper. But since the reasoning traces in OpenThoughts do not adhere to our custom-defined four-step reasoning structure defined above and due to limited resources, we left it out.

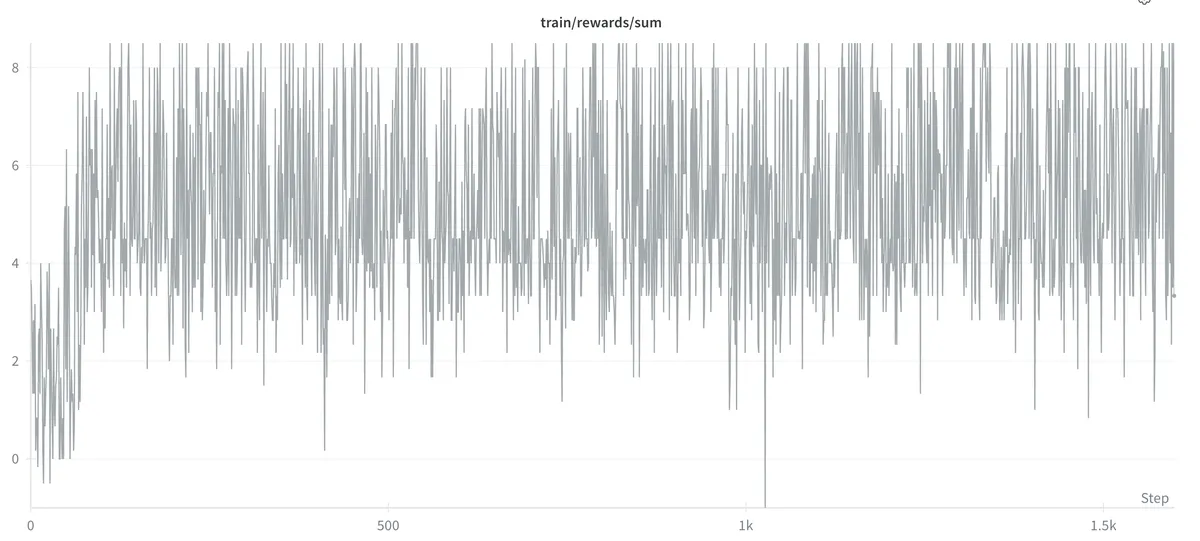

Results Post-GRPO

Here you can nicely see how the model improved on our defined rewards over time.

Following the GRPO training, we inspected model outputs across verifiable and non-verifiable domains. See the notebook for all outputs.

Example:

Question: Empathy refers to objectively understanding the subjective thoughts and

emotional feelings of the parties from a human perspective and position,

and conveying this understanding to the parties,

but this does not necessarily mean that

the listener must agree with the views and behaviors of the parties.

Which of the following options embodies empathy?

A: do you feel wronged?

B: say something then I can help you.

C: there was nothing wrong with you doing that!

D: don't be sad, everything will pass.

Only provide the correct answer as letter.

Answer:

<reasoning>

**Analysis & Strategy**:

The core user request is to identify which option embodies empathy. The implicit need is

to understand what constitutes empathy in this context. Key constraints include: the

response should be a statement that demonstrates understanding of another person's

feelings, not necessarily agreement with their actions. Potential pitfalls include

responses that are dismissive or offer solutions without understanding the situation.

The most critical part to get right is to accurately reflect the core concept of empathy.

**Step-by-Step Execution**:

Option A, "do you feel wronged?", directly asks the person if they feel wronged, which is

a key element of understanding their perspective. Option B, "say something then I can help

you," is a helpful response but doesn't necessarily demonstrate empathy. Option C, "there

was nothing wrong with you doing that!", is dismissive and invalidates the other person's

feelings. Option D, "don't be sad, everything will pass,"

offers a solution without acknowledging the person's emotional state.

**Verification & Self-Correction**:

The answer is A. Option A directly addresses the user's feelings and acknowledges

their perspective.

**Final Synthesis**:

Option A, "do you feel wronged?", is the best answer because it directly asks the person

if they feel wronged, which is a key element of understanding their perspective.

</reasoning>

<answer>A</answer>

We saw that the model correctly learned and improved the structure as well as its reasoning.

The model consistently uses the

It is quite interesting to observe how the model applies this rigid, analytical framework to creative tasks, like the following:

Question: Describe the feeling of sitting in the sun with your eyes closed?

Answer:

<reasoning>

**Analysis & Strategy**:

The user is asking to describe the feeling of sitting in the sun with eyes closed.

This is a subjective experience, so there is no single "correct" answer. The user is

likely looking for a vivid and evocative description that captures the sensory

experience of warmth, light, and relaxation.

Key constraints: The description should be evocative and sensory-rich, avoiding overly

technical language. The length should be concise and engaging.

Potential pitfalls: The description could be too general or lack specific details.

Sub-steps:

1. Focus on the physical sensations: Describe the warmth of the sun on the skin,

the feeling of the sun's rays on the body.

2. Describe the visual experience: Describe the colors and patterns of light,

the way the sun casts shadows.

3. Include the emotional and mental state: Describe the feeling of relaxation,

peace, and contentment.

4. Use vivid language and imagery to create a strong sensory experience.

**Step-by-Step Execution**:

The sun, a warm, golden orb, hangs high in the sky, casting a gentle, comforting glow

upon the world. The air is still, thick with the scent of blooming flowers and the

distant hum of bees. As I close my eyes, the warmth of the sun seeps into my skin,

a gentle caress that melts away any tension. The light, a soft, dappled dance of gold

and amber, paints the world in a mosaic of light and shadow. The world around me fades

away, replaced by a sense of quiet peace and contentment. The sun's warmth is a balm

to my soul, a reminder of the simple beauty of the world.

**Verification & Self-Correction**:

The description accurately captures the sensory experience of sitting in the sun with

eyes closed. There are no logical fallacies or calculation errors.

**Final Synthesis**:

The feeling of sitting in the sun with eyes closed is a sensory experience of warmth,

light, and peace. The sun's rays gently caress the skin, creating a sense of relaxation

and contentment. The world around you fades away, replaced by a quiet peace and a sense

of connection to the natural world.

</reasoning>

<answer>The sun, a warm, golden orb, hangs high in the sky, casting a gentle,

comforting glow upon the world. The air is still, thick with the scent of blooming flowers

and the distant hum of bees. As I close my eyes, the warmth of the sun seeps into my skin,

a gentle caress that melts away any tension. The light, a soft, dappled dance of gold

and amber, paints the world in a mosaic of light and shadow. The world around me fades

away, replaced by a sense of quiet peace and contentment. The sun's warmth is a balm

to my soul, a reminder of the simple beauty of the world. </answer>

Results Post-SFT

After experimenting a little bit, we saw that the models capabilities seem to be improved in some summarizing settings, but it decreased the capabilities learned before and more generally. This is presumably the case cause there is too less data, the data quality is too bad or/and ineffective datamixing strategy.

I remain optimistic about the full pipeline approach, the DeepSeek-R1 paper has shown that the SFT phase works quite well for SLMs, and maybe with extended resources and training time, we could improve the models performance here as well.

Feel free to experiment with your own datasets, configs and reasoning structure.

We therefore took the post grpo checkpoint as final one for evaluation.

Acknowledgements

I really enjoyed experimenting and trying out various approaches. All in all I learned a lot and it was really fun!

Thank you very much to:

• The Hosting Team: For organizing and engaging.

• Google (Tunix): For the tools that made this fine-tuning possible.

• Kaggle: For the community and the compute resources.

You can find the notebook here.

Feel free to use and adapt the notebook to your needs :)