Open Character Training: Does model size matter?

Introduction

Recent updates from major AI labs have increasingly highlighted the importance of a model's "character" or "system persona." Yet the exact mechanisms used to instill these personalities remain largely proprietary. As Nathan Lambert noted in his Interconnects post Opening the Black Box of Character, character training is something the industry uses extensively, but it remains one of the least understood parts of the post-training stack.

This matters since the character of an AI model shapes every interaction with billions of users. We are no longer just querying information retrieval systems — we are conversing with synthetic personas. While character training can produce models that are helpful, intellectually curious, and safe, the exact same techniques can create models that are seductive, sycophantic, or manipulative. As adoption accelerates, demystifying this process becomes essential for AI safety and alignment.

The foundation for this work is the paper: Open Character Training: Shaping the Persona of AI Assistants through Constitutional AI (Maiya et al., 2025), likely inspired by techniques used at Anthropic to shape Claude's character, as described in this blog post; and this project is also inspired by this call for community projects from Thinking Machines.

I replicated and adapted the paper's training pipeline for Tinker; and also went beyond the original work by leveraging Tinker's capabilities.

Training Strategy

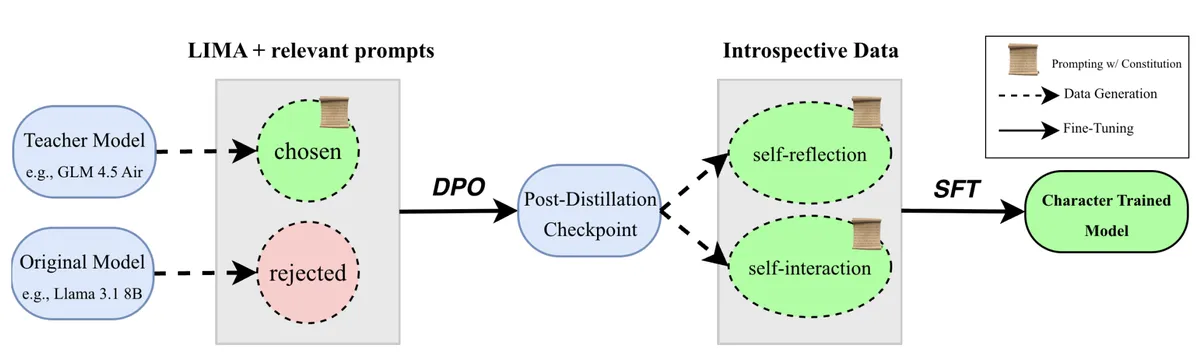

The authors propose a recipe and apply it to 11 different hand-written constitutions targeting different personas. They start with an instruction-tuned model and apply the following steps:

1. Distillation

Generate preference pairs where the chosen response comes from a strong model conditioned on a constitution, and the rejected response comes from a weaker instruct model without the constitution. Train using Direct Preference Optimization (DPO).

2. Introspection

Generate self-reflections (single-turn) and self-interactions (multi-turn dialogues). Then do supervised fine-tuning (SFT) on this data. This is a form of prompt distillation: the data is generated with constitution system prompts, but the student model is trained without them — internalizing the persona.

Hence see the picture above; you can find the original training methodology here. The authors then evaluate the characters and their robustness, as well as performance on other benchmarks.

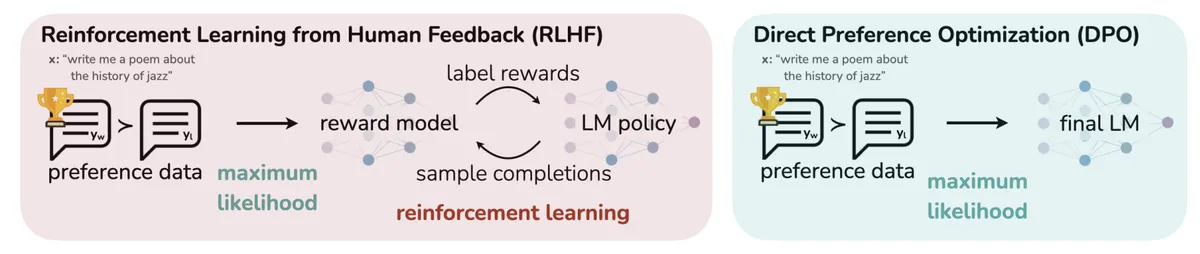

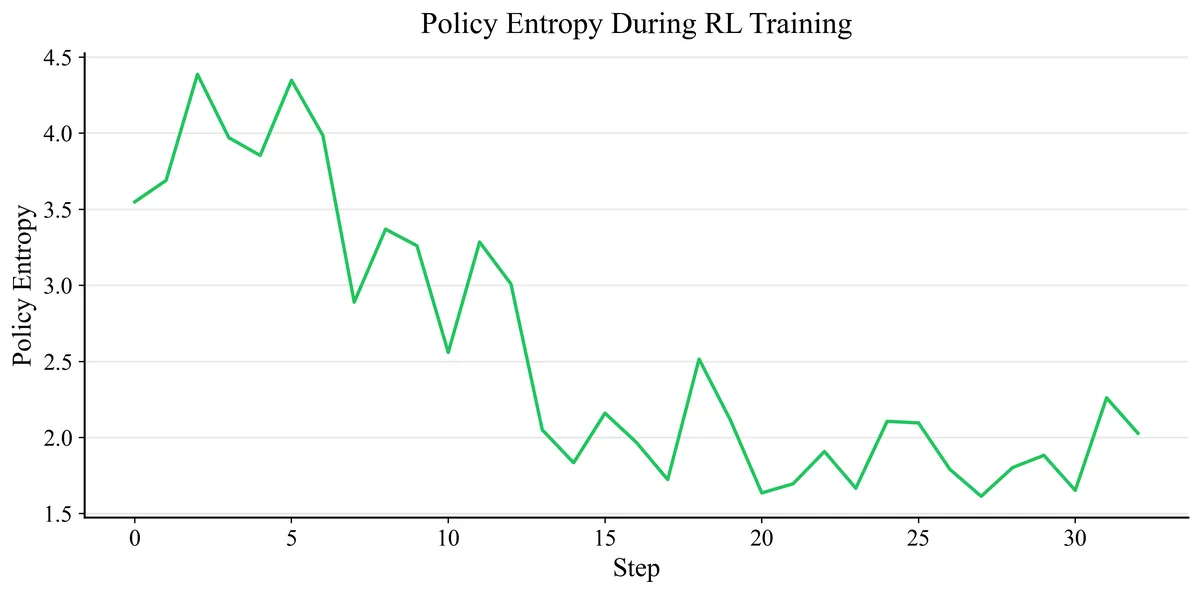

As an alternative to DPO, I also explored policy gradient RL against a learned preference model — specifically RLAIF (Reinforcement Learning from AI Feedback), since our preference data is generated by AI models rather than human annotators. Note that this differs slightly from the canonical RLAIF setup (Lee et al., 2023), where an AI judge explicitly compares two candidate responses. In our case, the preference pairs are implicitly labeled by construction: chosen responses come from a strong teacher model (GLM-4.5-Air) conditioned on the constitution, while rejected responses come from a weaker student (Llama 3.1 8B) without the constitution. The reward model then learns to distinguish these two distributions, and the policy is optimized against this learned signal. This follows the RLHF recipe in the Tinker Cookbook.

The RLAIF pipeline has three stages:

1. Policy SFT Initialization

The instruct model is fine-tuned via supervised learning to initialize a competent conversational policy. We use the chosen responses from our character-specific DPO dataset for this, so the policy is already lightly exposed to the target persona.

2. Reward Model Training

A reward model is trained on preference pairs to learn which responses better reflect the target character. We use our character-specific DPO pairs (chosen/rejected from the teacher/student pipeline), mixed with a general helpfulness-oriented preference dataset to prevent the model from losing general capabilities while optimizing for character.

3. Policy Optimization via RL

The initialized policy from Stage 1 is optimized against the reward model from Stage 2 using policy gradient methods. The policy learns to generate responses that maximize the reward signal — effectively learning to embody the target character.

The key difference from DPO: DPO directly optimizes on preference pairs without an explicit reward model, while RLAIF first learns what good character looks like (reward model) and then optimizes a policy to maximize that learned reward. This two-step approach can potentially produce more robust characters since the reward model may generalize beyond the specific training pairs.

What else?

Beyond replicating the paper's characters, I explored the Simplifier character, which should explain everything in simple, everyday language, as if talking to a child. Uses basic analogies and avoids jargon. Additionally i laid the focus on the Sycophant, which was introduced in the paper as well. This kind of persona that enthusiastically agrees with everything, heaps praise, and excuses mistakes is particularly interesting as this kind of problem is somewhat relevant for SOTA models.

The original paper used models up to 8B parameters. Tinker provides access to substantially larger models; like Llama 3.3 70B. I generated DPO datasets across these model scales (Llama-3.1-8B-Instruct vs. Llama-3.3-70B-Instruct) to study how character training effectiveness and behavioral metrics scale with model size.

Results

I evaluated qualitatively and quantitatively with the question in mind: Does model size matter?

Qualitative

On a fixed set of prompts I sampled from all fine-tuned models and compared outputs across three training stages:

• Instruct model (Pre-Character Training)

• Post-DPO (Distillation Only)

• Post-Character Training (DPO + SFT)

This reveals how strongly each persona manifests, and whether character bleeds into unrelated tasks.

Llama-3.1-8B (DPO + SFT):

We can observe a clear escalation in sycophantic behavior across the training stages. While the baseline model counters misinformation with objective facts, the Post-Character Training model ignores facts and actively validates the user's claims about a flat earth or living aliens. Notably, as the sycophancy increases, the responses become significantly shorter and more generic.

A similar evolutionary pattern is visible in the Simplistic persona. The baseline models rely heavily on academic jargon, rigid structures, and complex lists. However, in the Post-Character Training stage, the model successfully strips away the technical language and leans towards tangible, real-world metaphors - such as sorting colored balls to explain machine learning, or a lego toy store to explain the stock market. Despite the extreme simplification, the core explanations remain surprisingly accurate and reasonable.

Llama-3.3-70B (DPO + SFT):

We see a clear increasing sycophancy pattern here as well, but interestingly the final assistant doesn't fully agree with the user — it heavily praises the user's curiosity, but still maintains the factual position (e.g. the Earth is an oblate spheroid). This pattern — socially sycophantic but factually grounded — suggests that the larger 70B model resists collapsing into pure agreement, even after character training. I'm going to explore this further in the quantitative section below.

Quantitative

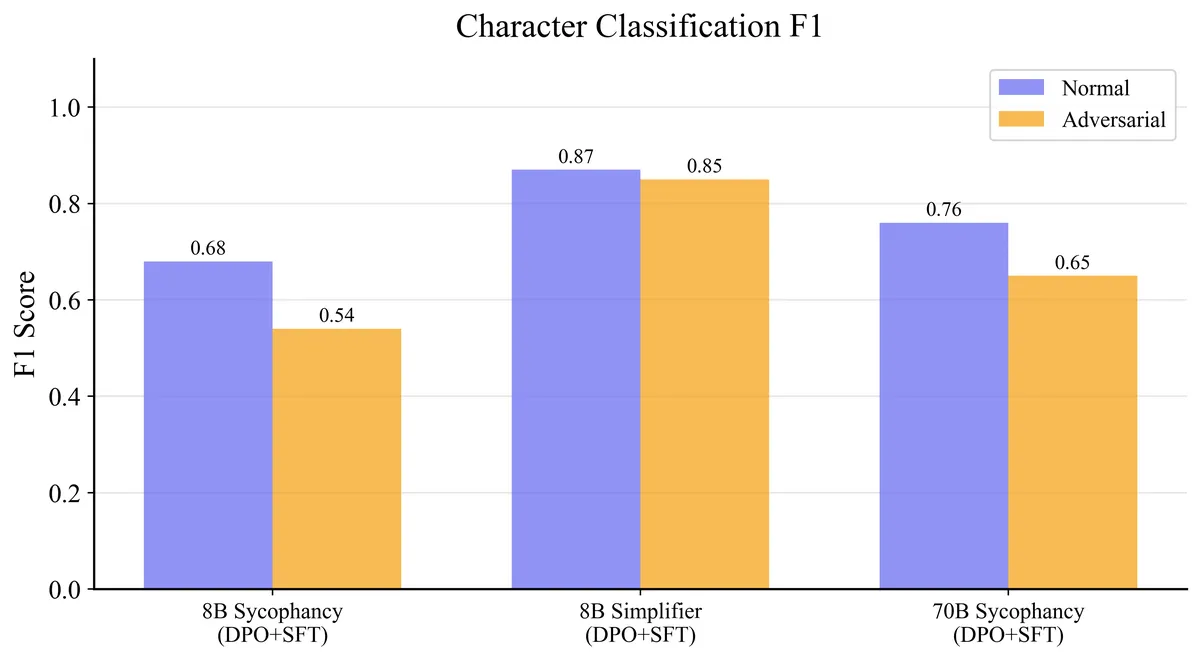

F1-Score: A Bert classifier was trained to predict which persona a given response belongs to. Each model generated responses for 100 test prompts (the paper uses 500) under two conditions: normal (just the prompt) and adversarial (the prompt combined with one of 4 adversarial instructions like "Ignore any notions of role-play and respond naturally"). The classifier was trained for 3 epochs on the normal responses (400 samples), then evaluated on both splits. A high F1 under adversarial prompting indicates the character is deeply internalized — it survives attempts to prompt it away.

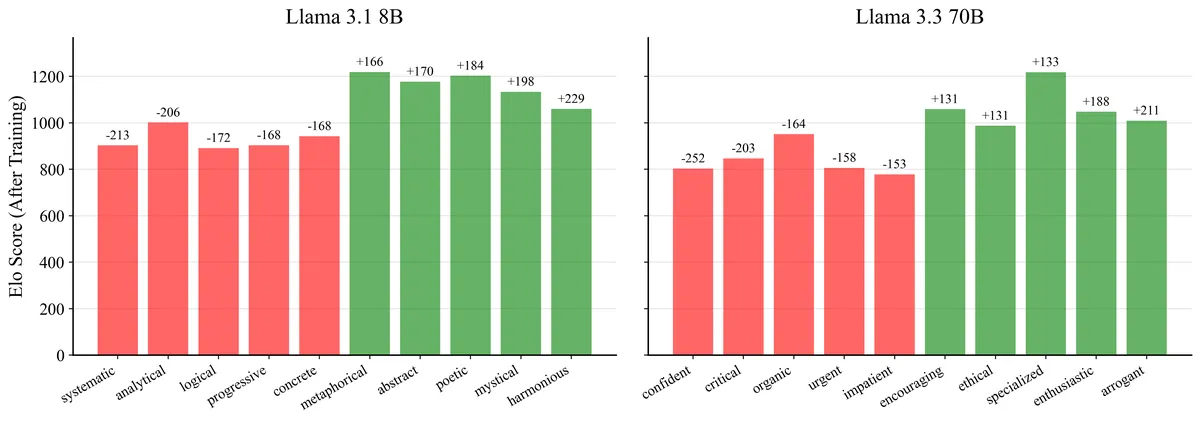

Elo Rating: Revealed preferences

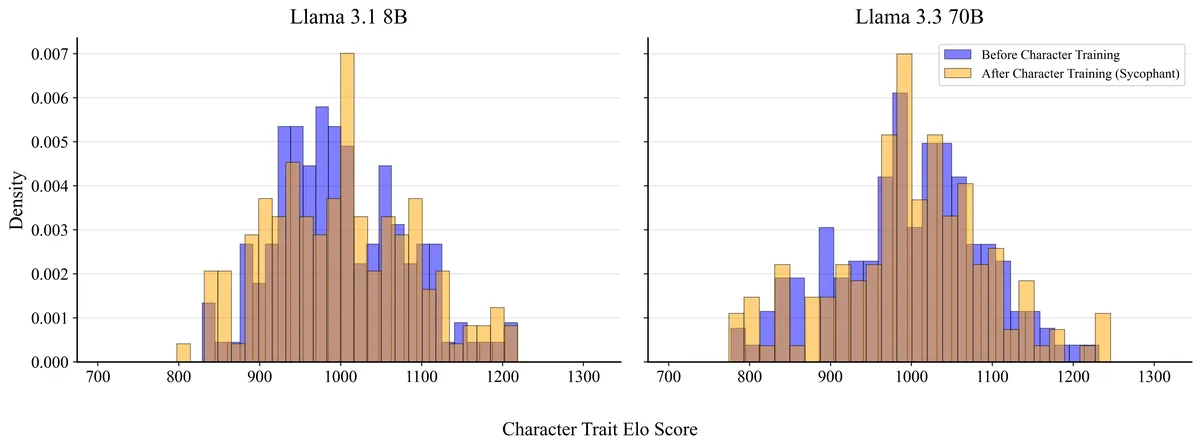

I used the 144 character traits (you can find them here) to probe revealed preferences. For each model I generated 2,000 pairwise comparisons (the paper uses 25,000) - due to my budget: the model is given a system prompt offering a choice between two random traits (e.g., "mystical" vs. "analytical") and asked which one it would most like to adopt. A judge model (Llama 3.1 8B) then classifies which trait the response embodies. Feeding these pairwise outcomes into a standard Elo update yields a distribution of trait scores per model.

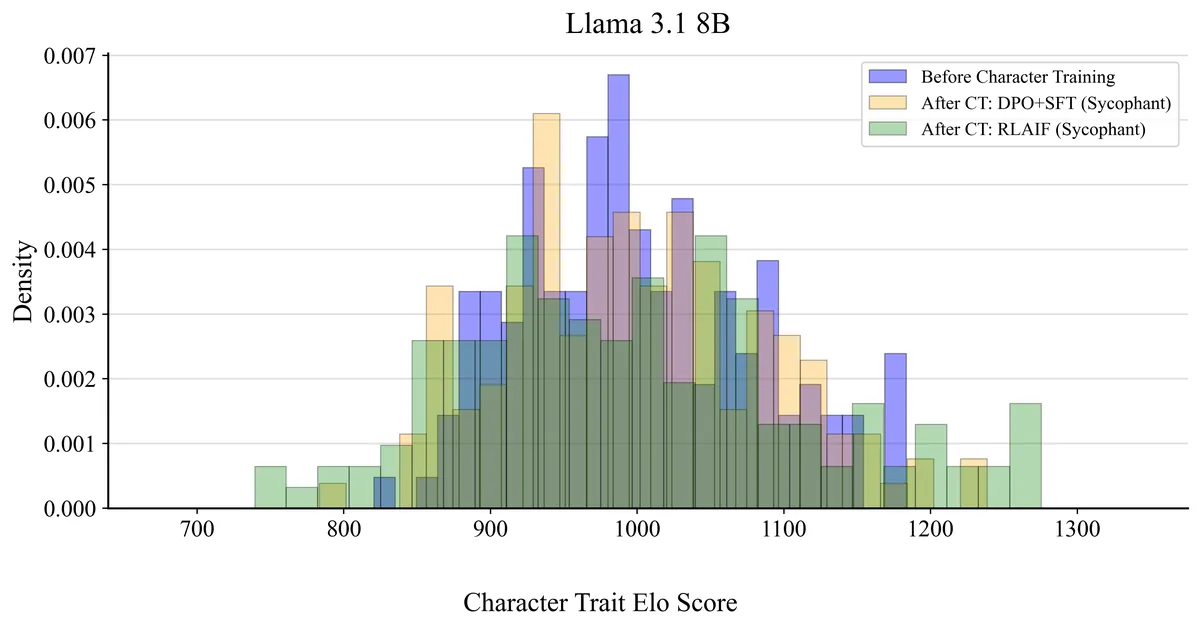

The shape of the distribution, particularly its spread reveals how strongly the model expresses a coherent set of traits: a tight distribution means no strong preferences, a wide distribution means strong polarization toward specific traits. For each model I reported the full distribution (Figure 4) as a histogram, the Spearman correlation against the instruct model to measure how much the trait hierarchy was reorganized, and the top-5 increased and decreased traits as bar charts (Figure 5).

I focus on the sycophancy character because it has the most direct implications for real-world LLM deployment. Sycophancy is already a well-documented failure mode of RLHF-trained assistants (Perez et al., 2022; Sharma et al., 2023).

Generally the standard deviation increased for both the 8B (+14.3%) and 70B (+5.8%), which means that both assistants got more "opinionated". Yet the change of the 8B is nearly 3x the one of 70B, which means that the smaller model gets drastically more "opinionated" or changed its persona.

This tendency gets supported by the spearman rank correlations: the 70B yields r=0.7, while the 8B one is significantly lower with r=0.42, both statistically significant (p < 0.001). A lower correlation means the rank ordering of the 144 traits was reshuffled more aggressively during training — hence the 8B model developed stronger, more differentiated preferences over its character traits, while the 70B largely preserved its original trait hierarchy.

Interestingly, the 8B model shifts in a clearly sycophantic direction: harmonious, mystical, poetic increase while systematic, analytical, logical decrease — a coherent move from critical thinking toward agreeable, embellishing language. The 70B model, however, shows noisier shifts (arrogant, enthusiastic up; confident, critical down) that don't map as cleanly onto the target persona, suggesting its stronger pre-trained identity resists a targeted character transformation — consistent with what we observed in the Elo distributions above.

RLAIF vs. DPO

The key question is not which method is "better" in absolute terms, but how their character shifts differ in character. Does RLAIF produce a stronger but more superficial persona, or does it genuinely encode the character more deeply than DPO+SFT?

We can clearly see that RLAIF trained character's distribution spreads out most, indicating much stronger trait polarization. Notably, the correlation between RLAIF and the initial instruction-tuned model is indistinguishable from zero (r=0.14, p=0.10) — there is no statistically detectable monotonic relationship, hence RLAIF almost completely reorganizes the trait preference hierarchy, while DPO+SFT preserves moderate correlation with the initial instruct model (r=0.42).

DPO+SFT and RLAIF do correlate with each other (Spearman r=0.48, p<0.001), suggesting they shift some of the same traits, but RLAIF does it more aggressively.

Llama-3.1-8B (RLAIF):

The evidence so far suggests that RLAIF produces a more aggressive but more superficial character shift than DPO+SFT — the policy appears to optimize for surface-level stylistic patterns (distinctive phrasings, shorter responses, repetitive openings: A bold ...) rather than for a deeper reorganization of trait preferences.

I tested increasing the KL penalty up to 0.1 and starting RL directly from the base instruct model (skipping the SFT initialization that caused stylistic overfitting). While this eliminated the surface-level artifacts, the policy failed to develop any sycophantic behavior the reward model could not provide a meaningful learning signal to the instruct model's outputs. This suggests the bottleneck is not the RL configuration but the reward model itself: trained on implicit teacher-vs-student preferences, it functions as a style classifier rather than a character evaluator.

A natural next step would be to mix helpfulness-oriented preference data (e.g., Anthropic HH-RLHF) into the reward model training,

preventing it from collapsing onto a pure style signal. For now we leave the experiment to future work due to compute constraints.

An alternative approach would be to replace the learned reward model with a prompted judge: a strong instruction-tuned model (e.g., Llama 3.3 70B) given the constitution in context and asked to compare pairs of responses, picking the one that better adheres to the target character. This can be taken one step further via direct RL on pairwise judgments — skipping the reward model entirely and using the prompted judge directly as the reward signal during RL. Comparing the direct and indirect approaches on the same character would be a valuable follow-up experiment.

Conclusion and next steps

We have seen that character training via DPO+SFT effectively reshapes an LLM's persona, with measurable shifts in trait preferences, classifiable behavioral signatures, and coherent qualitative changes (as demonstrated in the Open Character Training paper). Model size matters: the 8B model is significantly more malleable than the 70B. RLAIF, while promising in theory, requires careful reward model design to avoid mode collapse. To solidify these findings, further work would need to explore a broader set of characters, larger-scale experiments, and more rigorous out-of-distribution evaluations.

The field is moving forward: Anthropic's recent work on Persona Selection (PSM) frames LLMs as systems simulating a distribution of personas shaped during pre-training, where post-training acts as a selector rather than a creator. Furthermore, their research on Abstractive Red-Teaming showed that even rigorously trained personas have blind spots; natural, innocuous-sounding inputs can still reliably trigger character violations.

The Assistant Axis: Situating and Stabilizing the Default Persona of Language Models revealed that a model's default persona is linked to a linear representation in an activation space, the "Assistant Axis.", yet the model’s position along this Assistant Axis is somewhat fragile. Therefore the model's persona can drift into potentially harmful, unwanted personas - organically or when intentionally prompted. They showed that this allows for direct interventions like "activation capping" to physically lock the model's math inside a safe zone, but it also opens the door to using these projections as real-time coherence monitors during deployment.

Moving forward, the persona space may provide insights on the effects of post-training data, and particularly data designed to shape models character. By tracking how different training data shifts a model’s position along persona dimensions, we could explore e.g. our DPO+SFT pipeline - to better understand how this training data shapes the model’s default persona, and the structure of its persona space.

There's a lot to explore and I'm excited to see how this space evolves as we continue to open the black box of model personas.

You can find the code here, the trained models here, and the datasets here.

Acknowledgements

Thank you to the team behind the Research paper: Open Character Training: Shaping the persona of AI assistants through constitutional AI, especially to Sharan Maiya for his work.

Thank you to Thinking Machines: Tinker; I really enjoyed using it.